Hearing Substitution Using Haptic Feedback

Exploring how AAC and the Neosensory Buzz's haptic feedback can substitute for hearing and help deaf parents connect with their kids.

Ever wonder how difficult it is for deaf people to parent hearing, speaking children, from infancy through the teen years and beyond?

Parents face unique challenges in each phase of parenting.

Newborn babies do not speak. The only way they know to communicate is to cry. They cry when hungry, they cry when fussy, they cry when in pain. Imagine a deaf parent a short distance from the baby's crib: they hear nothing! The baby might be in real pain or discomfort that needs immediate attention, but her screams go unheard. How about a device that listens for the baby's cry and notifies deaf parents in real time via haptic feedback on a wearable device?

Pre-schoolers are different from newborns. They learn new ways of communicating and they learn to speak, but the problem for deaf parents remains the same: they cannot hear what their little ones are trying to tell them. On the positive side, kids can learn to use a smartphone or tablet and can distinguish between different images and icons. Yes, I am talking about Augmentative and Alternative Communication (AAC) for deaf parents, not for hearing-impaired children!

How about an app running on a tablet through which young children can communicate with their deaf parents? The app would send a haptic signal to a wearable device, and the deaf parent would be able to recognize the signal pattern.

Older children may prefer to just talk instead of tapping on icons. How about triggering the same actions by voice?

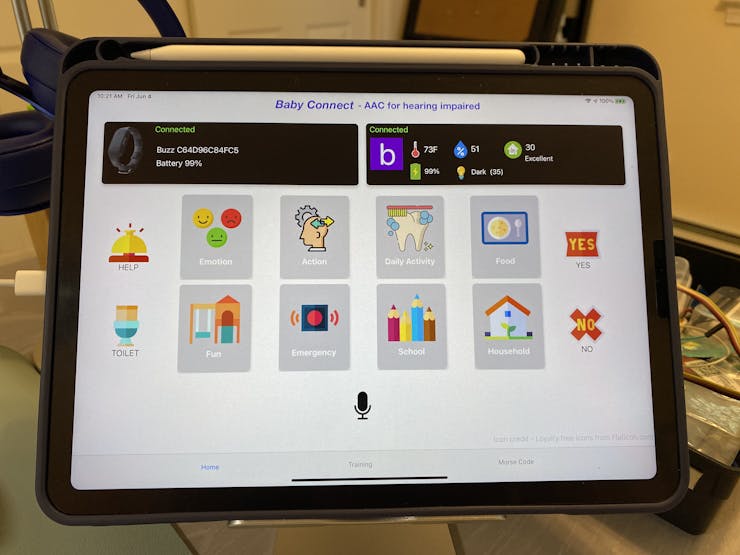

Baby Connect

Introducing "Baby Connect", an app that runs on a tablet. The app connects to the Neosensory Buzz over Bluetooth and sends haptic feedback as vibration when an icon is tapped on the screen, or when a word is spoken while holding down the on-screen record button.

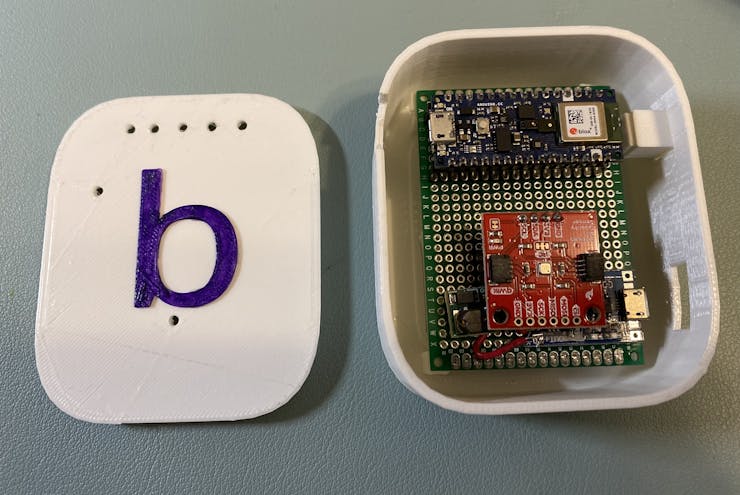

The system also includes a device equipped with a microphone and environmental sensors that monitor indoor air quality (IAQ), temperature, humidity, and ambient light. The microphone records surrounding sounds, and a machine learning algorithm analyzes them to determine whether the baby is crying.

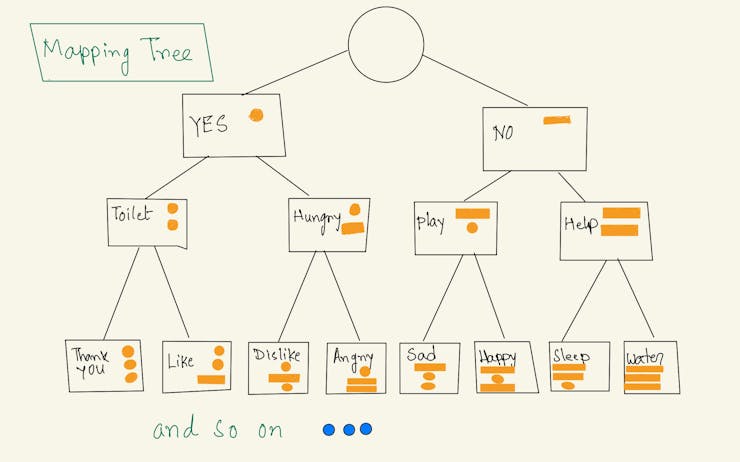

Mapping actions to vibration

Combining the concepts of AAC and Morse code, I mapped each AAC action (such as Like, Thank You, Hungry, etc.) to a visual pattern of DOTs and DASHes. One difference from Morse code is that Morse signals are sequential, such as DOT DOT DOT (S) or DASH DASH DASH (O). Here, because the Buzz has 4 motors that can vibrate independently, we have 4 parallel channels. So "LIKE" is represented by DOT, DOT, DASH (motors 1, 2 and 3 vibrating simultaneously, with motor 4 off).

DOT is represented by a low motor value of 50 and DASH by a high motor value of 255. So the "LIKE" action above is represented by the frame [50, 50, 255, 0], repeated 40 times (40 frames).

Similarly, I have mapped several other AAC actions to vibration patterns, as shown in the image above.

Check out the full project code and instructions on Hackster.io.
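The encoding described above can be sketched in a few lines of Python. This is only an illustration, not the project's actual code: the names `ACTION_PATTERNS`, `pattern_to_frame` and `action_to_frames` are hypothetical, and only the "LIKE" pattern is taken from the text. Sending the frames to the Buzz over Bluetooth is deliberately left out.

```python
from typing import Dict, List, Optional

# Motor values taken from the text: DOT = 50, DASH = 255, 0 = motor off.
DOT = 50
DASH = 255
OFF = 0

NUM_MOTORS = 4          # the Buzz has 4 independent motors
FRAMES_PER_SIGNAL = 40  # each pattern is repeated for 40 frames

# Hypothetical lookup table. Only "LIKE" comes from the text;
# other actions would be added in the same way.
ACTION_PATTERNS: Dict[str, List[Optional[str]]] = {
    "LIKE": ["DOT", "DOT", "DASH", None],
}

_LEVELS = {"DOT": DOT, "DASH": DASH, None: OFF}


def pattern_to_frame(pattern: List[Optional[str]]) -> List[int]:
    """Convert one DOT/DASH symbol per motor into a frame of motor values."""
    if len(pattern) != NUM_MOTORS:
        raise ValueError(f"expected {NUM_MOTORS} symbols, got {len(pattern)}")
    return [_LEVELS[symbol] for symbol in pattern]


def action_to_frames(action: str, repeat: int = FRAMES_PER_SIGNAL) -> List[List[int]]:
    """Build the full signal for an action: the same frame repeated `repeat` times."""
    frame = pattern_to_frame(ACTION_PATTERNS[action])
    return [list(frame) for _ in range(repeat)]


if __name__ == "__main__":
    frames = action_to_frames("LIKE")
    print(frames[0], len(frames))  # [50, 50, 255, 0] 40
```

Keeping the patterns in a table separate from the motor values means a new action only needs one new dictionary entry, and the DOT and DASH levels can be tuned in one place.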